- Topic1/3

12k Popularity

14k Popularity

20k Popularity

5k Popularity

20k Popularity

- Pin

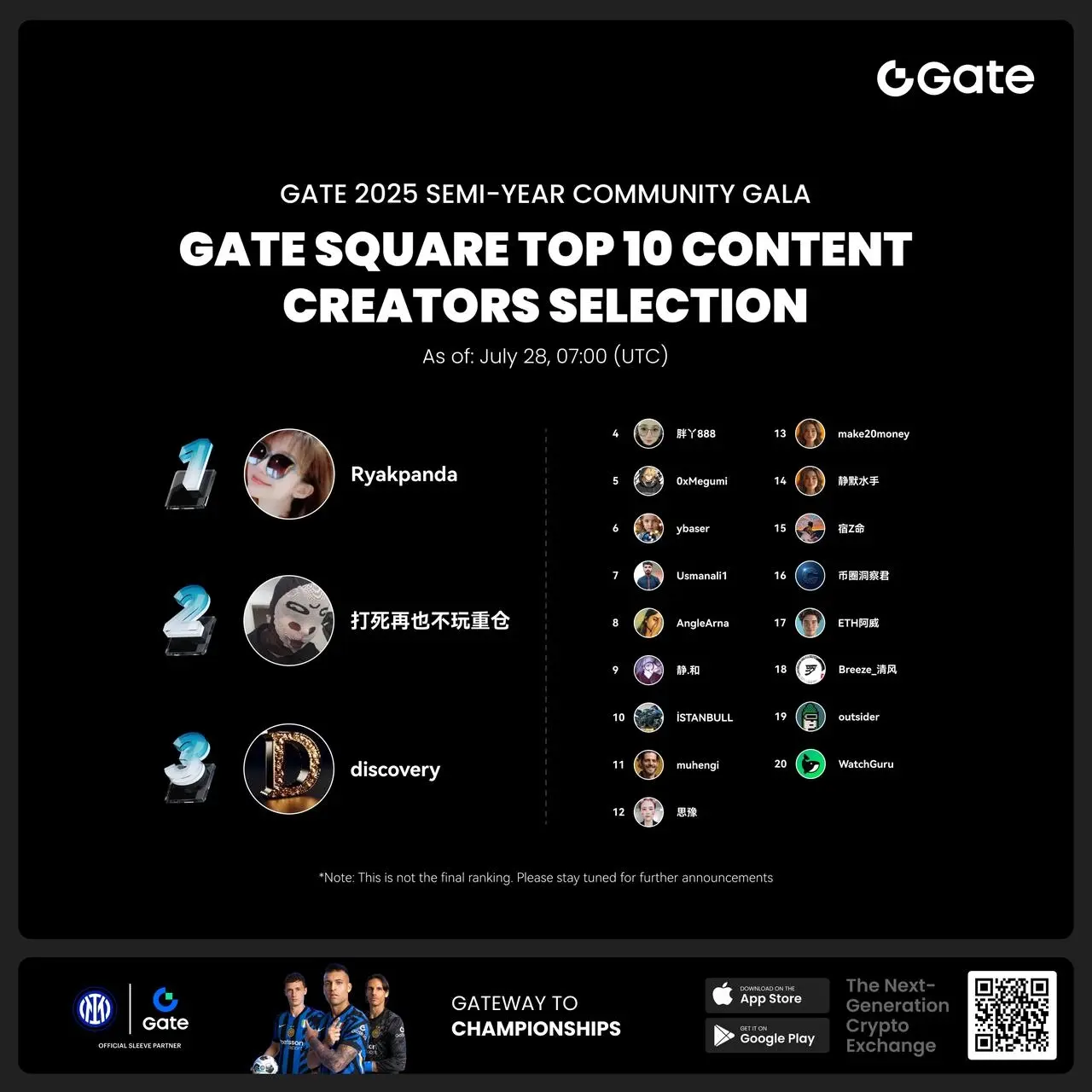

- #Gate 2025 Semi-Year Community Gala# voting is in progress! 🔥

Gate Square TOP 40 Creator Leaderboard is out

🙌 Vote to support your favorite creators: www.gate.com/activities/community-vote

Earn Votes by completing daily [Square] tasks. 30 delivered Votes = 1 lucky draw chance!

🎁 Win prizes like iPhone 16 Pro Max, Golden Bull Sculpture, Futures Voucher, and hot tokens.

The more you support, the higher your chances!

Vote to support creators now and win big!

https://www.gate.com/announcements/article/45974

- 🎉 Hey Gate Square friends! Non-stop perks and endless excitement—our hottest posting reward events are ongoing now! The more you post, the more you win. Don’t miss your exclusive goodies! 🚀

1️⃣ #ETH Hits 4800# | Market Analysis & Prediction: Boldly share your ETH predictions to showcase your insights! 10 lucky users will split a 0.1 ETH prize!

Details 👉 https://www.gate.com/post/status/12322612

2️⃣ #Creator Campaign Phase 2# |ZKWASM Topic: Share original content about ZKWASM or its trading activity on X or Gate Square to win a share of 4,000 ZKWASM!

Details 👉 https://www.gate.com/post/st

Foresight Ventures: A Rational View on Decentralized Computing Power Networks

Original Author: Yihan Xu, Foresight Ventures

TL;DR

1. Distributed Computing Power—Large Model Training

We are discussing the application of distributed computing power in training, and generally focus on the training of large language models. The main reason is that the training of small models does not require much computing power. In order to do distributed data privacy and a bunch of projects The problem is not cost-effective, it is better to solve it directly and centrally. The large language model has a huge demand for computing power, and it is now in the initial stage of the explosion. From 2012 to 2018, the computing demand of AI will double every 4 months, and now it is even more demanding for computing power. Concentrated points can predict the future; 5-8; years will still be a huge incremental demand.

While there are huge opportunities, problems also need to be seen clearly. Everyone knows that the scene is huge, but where are the specific challenges? Who can "target" these problems instead of blindly entering the game, which is the core of judging the excellent projects of this track.

;(NVIDIA NeMo Megatron Framework)

1. Overall training process

Take training a large model with 175 billion parameters as an example. Due to the huge size of the model, it needs to be trained in parallel on many "GPU" devices. Suppose there is a centralized computer room, there are; 100; GPUs, each device has; 32; GB; memory.

This process involves a large amount of data transfer and synchronization, which may become a bottleneck for training efficiency. Therefore, optimizing network bandwidth and latency, and using efficient parallel and synchronization strategies are very important for large-scale model training.

2. The bottleneck of communication overhead:

It should be noted that the communication bottleneck is also the reason why the current distributed computing power network cannot do large language model training.

Each node needs to exchange information frequently to work together, which creates communication overhead. For large language models, this problem is especially serious due to the large number of parameters of the model. The communication overhead is divided into these aspects:

Although there are some methods to reduce communication overhead, such as compression of parameters and gradients, efficient parallel strategies, etc., these methods may introduce additional computational burden or negatively affect the training effect of the model. Also, these methods cannot completely solve the communication overhead problem, especially in the case of poor network conditions or large distances between computing nodes.

As an example:

Decentralized distributed computing power network

The GPT-3; model has; 175 billion; billion parameters, and if we use single-precision floating point numbers (each parameter; 4; bytes) to represent these parameters, then storing these parameters requires ~; 700; GB; of memory. In distributed training, these parameters need to be frequently transmitted and updated between computing nodes.

Suppose there are; 100; computing nodes, and each node needs to update all parameters in each step, then each step needs to transmit about; 70; TB (700; GB*; 100;) of data. If we assume that a step takes ;1;s (very optimistic assumption), then every second ;70;TB; of data needs to be transferred. This demand for bandwidth already far exceeds that of most networks and is also a matter of feasibility.

In reality, due to communication delays and network congestion, the data transmission time may be much longer than;1;s. This means that computing nodes may need to spend a lot of time waiting for data transmission instead of performing actual calculations. This will greatly reduce the efficiency of training, and this reduction in efficiency cannot be resolved by waiting, but the difference between feasible and infeasible, which will make the entire training process infeasible.

Centralized computer room

Even in a centralized computer room environment, the training of large models still requires heavy communication optimization.

In a centralized computer room environment, high-performance computing devices are used as a cluster, connected through a high-speed network to share computing tasks. However, even when training a model with an extremely large number of parameters in such a high-speed network environment, the communication overhead is still a bottleneck, because the parameters and gradients of the model need to be frequently transmitted and updated between various computing devices.

As mentioned at the beginning, suppose there are; 100; computing nodes, each server has; 25; Gbps; network bandwidth. If each server needs to update all parameters in each training step, then each training step needs to transmit about; 700; GB; data needs ~; 224; seconds. By taking advantage of the centralized computer room, developers can optimize the network topology inside the data center and use technologies such as model parallelism to significantly reduce this time.

In contrast, if the same training is performed in a distributed environment, it is assumed that there are still; 100; computing nodes distributed all over the world, and the average network bandwidth of each node is only; 1; Gbps. In this case, transferring the same; 700; GB; data takes ~; 5600; seconds, much longer than in a centralized computer room. Also, due to network delays and congestion, the actual time required may be longer.

However, compared to the situation in a distributed computing power network, it is relatively easy to optimize the communication overhead in a centralized computer room environment. Because in a centralized computer room environment, computing devices are usually connected to the same high-speed network, and the bandwidth and delay of the network are relatively good. In a distributed computing power network, computing nodes may be distributed all over the world, and the network conditions may be relatively poor, which makes the problem of communication overhead more serious.

In the process of training GPT-3, OpenAI adopted a model parallel framework called "Megatron" to solve the problem of communication overhead. Megatron divides the parameters of the model and processes them in parallel among multiple GPUs, and each device is only responsible for storing and updating a part of the parameters, thereby reducing the amount of parameters that each device needs to process and reducing communication overhead. At the same time, a high-speed interconnection network is also used during training, and the length of the communication path is reduced by optimizing the network topology.

(Data used to train LLM models)

3. Why can't the distributed computing power network do these optimizations

It can be done, but compared with the centralized computer room, the effect of these optimizations is very limited.

Network topology optimization: In the centralized computer room, the network hardware and layout can be directly controlled, so the network topology can be designed and optimized according to the needs. However, in a distributed environment, computing nodes are distributed in different geographical locations, even one in China and one in the United States, and there is no way to directly control the network connection between them. Although software can be used to optimize the data transmission path, it is not as effective as directly optimizing the hardware network. At the same time, due to differences in geographical locations, network delays and bandwidths also vary greatly, which further limits the effect of network topology optimization.

Model parallelism: Model parallelism is a technology that divides the parameters of the model into multiple computing nodes, and improves the training speed through parallel processing. However, this method usually needs to transmit data between nodes frequently, so it has high requirements on network bandwidth and latency. In a centralized computer room, due to high network bandwidth and low latency, model parallelism can be very effective. However, in a distributed environment, model parallelism is greatly limited due to poor network conditions. ; ; ; ; ;

4. Data security and privacy challenges

Almost all links involving data processing and transmission may affect data security and privacy:

Data distribution: The training data needs to be distributed to each node participating in the calculation. Data in this link may be maliciously used/leaked on distributed nodes.

Model training: During the training process, each node will use its assigned data for calculation, and then output the update or gradient of the model parameters. During this process, if the calculation process of the node is stolen or the result is maliciously analyzed, data may also be leaked.

Parameter and Gradient Aggregation: The output of each node needs to be aggregated to update the global model, and the communication during the aggregation process may also leak information about the training data.

**What solutions are available for data privacy concerns? **

Summary

Each of the above methods has its applicable scenarios and limitations, and none of the methods can completely solve the data privacy problem in the large model training of distributed computing power network.

**Will ZK, which has high hopes, solve the data privacy problem in large model training? **

In theory; ZKP; can be used to ensure data privacy in distributed computing, allowing a node to prove that it has performed calculations according to regulations, but does not need to disclose actual input and output data.

But in fact, "ZKP" will face the following bottlenecks in the scenario of using large-scale distributed computing power network to train large models:

Summary

It will take several years of research and development to use "ZKP" for large-scale distributed computing networks to train large models, and it will also require more energy and resources from the academic community in this direction.

2. Distributed Computing Power—Model Reasoning

Another relatively large scenario of distributed computing power is model reasoning. According to our judgment on the development path of large models, the demand for model training will gradually slow down as the large models mature after passing a high point. Inference requirements will correspondingly increase exponentially with the maturity of large models and "AIGC".

Compared with training tasks, inference tasks usually have lower computational complexity and weaker data interaction, and are more suitable for distributed environments.

(Power LLM inference with NVIDIA Triton)

1. Challenge

Communication Delay:

In a distributed environment, communication between nodes is essential. In a decentralized distributed computing power network, nodes may be spread all over the world, so network latency can be a problem, especially for reasoning tasks that require real-time response.

Model Deployment and Update:

The model needs to be deployed to each node. If the model is updated, each node needs to update its model, which consumes a lot of network bandwidth and time.

Data Privacy:

Although inference tasks usually only require input data and models, and do not need to return a large amount of intermediate data and parameters, the input data may still contain sensitive information, such as users' personal information.

Model Security:

In a decentralized network, the model needs to be deployed on untrusted nodes, which will lead to the leakage of the model and lead to the problem of model property rights and abuse. This can also raise security and privacy concerns, if a model is used to process sensitive data, nodes can infer sensitive information by analyzing the behavior of the model.

QC:

Each node in a decentralized distributed computing power network may have different computing capabilities and resources, which may make it difficult to guarantee the performance and quality of inference tasks.

2. Feasibility

Computational complexity:

In the training phase, the model needs to iterate repeatedly. During the training process, it is necessary to calculate forward propagation and back propagation for each layer, including the calculation of activation function, calculation of loss function, calculation of gradient and update of weight. Therefore, the computational complexity of model training is high.

In the inference phase, only one forward pass is required to compute the prediction. For example, in; GPT-3;, it is necessary to convert the input text into a vector, and then perform forward propagation through each layer of the model (usually; Transformer; layer), and finally get the output probability distribution, and generate according to this distribution next word. In;GANs;, the model needs to generate an image based on the input noise vector. These operations only involve the forward propagation of the model, do not need to calculate gradients or update parameters, and have low computational complexity.

Data Interactivity:

During the inference phase, the model usually processes a single input rather than the large batch of data during training. The result of each inference only depends on the current input, not on other input or output, so there is no need for a large amount of data interaction, and the communication pressure is less.

Taking the generative image model as an example, assuming we use; GANs; to generate images, we only need to input a noise vector to the model, and then the model will generate a corresponding image. In this process, each input will only generate one output, and there is no dependency between outputs, so there is no need for data interaction.

Taking "GPT-3" as an example, each generation of the next word only requires the current text input and the state of the model, and does not need to interact with other inputs or outputs, so the requirement for data interactivity is also weak.

Summary

Regardless of whether it is a large language model or a generative image model, the computational complexity and data interactivity of reasoning tasks are relatively low, which is more suitable for decentralized distributed computing power networks, which is why most projects we see now In one direction of force.

3. Project

The technical threshold and technical breadth of a decentralized distributed computing power network are very high, and it also requires the support of hardware resources, so we have not seen too many attempts now. Take ;Together; and ;Gensyn.ai; for example:

1.Together

(RedPajama from Together)

Together; is an open source company that focuses on large models and is committed to decentralized; AI; computing power solutions, hoping that anyone can access and use it anywhere; AI. Together;just completed;Lux Capital;led;20;m USD;seed round of financing.

Together; co-founded by; Chris, Percy, Ce; the original intention is that large model training requires a large number of high-end; GPU; clusters and expensive expenditures, and these resources and model training capabilities are also concentrated in a few large companies.

From my point of view, a more reasonable entrepreneurial plan for distributed computing power is:

Step;1. Open source model

To implement model reasoning in a decentralized distributed computing power network, the prerequisite is that nodes must be able to obtain the model at low cost, that is to say, the model using the decentralized computing power network needs to be open source (if the model needs to be licensed in the corresponding If used below, it will increase the complexity and cost of the implementation). For example, chatgpt, as a non-open source model, is not suitable for execution on a decentralized computing power network.

Therefore, it can be speculated that the invisible barrier of a company that provides a decentralized computing power network needs to have strong large-scale model development and maintenance capabilities. Self-developed and open source a powerful "base model" can get rid of the dependence on third-party model open source to a certain extent, and solve the most basic problems of decentralized computing power network. At the same time, it is more conducive to proving that the computing power network can effectively carry out training and reasoning of large models.

And "Together" does the same. Recently released; based on; LLaMA;; language model.

Step;2. Distributed computing power lands on model reasoning

As mentioned in the above two sections, compared with model training, model inference has lower computational complexity and data interaction, and is more suitable for a decentralized distributed environment.

Based on the open source model, Together;'s R&D team has made a series of updates to the "RedPajama-INCITE-3; B; ;M;2 Pro;processor;MacBook Pro) models run more silky smooth. At the same time, although the scale of this model is small, its ability exceeds other models of the same scale, and it has been practically applied in legal, social and other scenarios.

Step;3. The implementation of distributed computing power in model training

(Overcoming Communication Bottlenecks for Decentralized Training; schematic diagram of computing power network)

From a medium to long-term perspective, although facing great challenges and technical bottlenecks, it must be the most attractive to undertake "AI" computing power requirements for large-scale model training. Together; at the beginning of its establishment, it began to lay out how to overcome the communication bottleneck in decentralized training. They also published a related paper on NeurIPS 2022: Overcoming Communication Bottlenecks for Decentralized Training. We can mainly summarize the following directions:

Scheduling Optimization

When training in a decentralized environment, it is important to assign communication-heavy tasks to devices with faster connections because the connections between nodes have different latencies and bandwidths. Together; by building a model to describe the cost of a specific scheduling strategy, better optimize scheduling strategies to minimize communication costs and maximize training throughput. Together; the team also found that even though the network was 100 times slower, the end-to-end training throughput was only 1.7 to 2.3 times slower. Therefore, it is interesting to catch up the gap between distributed networks and centralized clusters through scheduling optimization.

Communication compression optimization

Together; proposes communication compression for forward activations and backward gradients, introducing the "AQ-SGD" algorithm, which provides strict guarantees for stochastic gradient descent convergence. AQ-SGD; able to fine-tune large base models on slow networks (e.g.; 500 Mbps), only slower than end-to-end training performance on centralized networks (e.g.; 10 Gbps) without compression ;31%;. In addition, AQ-SGD; can also be combined with state-of-the-art gradient compression techniques (such as; QuantizedAdam) to achieve; 10%; end-to-end speed improvement.

Project Summary

Together; the team configuration is very comprehensive, the members all have a very strong academic background, and are supported by industry experts from large-scale model development, cloud computing to hardware optimization. And "Together" does show a long-term patient posture in path planning, from developing open source large models to testing idle computing power (such as; mac) in the distributed computing power network using model reasoning, and then to distributed computing. The layout of forces on large model training. — There is that kind of accumulation and thin hair feeling:);

But so far, we haven't seen "Together" too many research results in the incentive layer. I think this has the same importance as technology research and development, and it is a key factor to ensure the development of decentralized computing power network.

2.Gensyn.ai

;(Gensyn.ai)

From the technical path of "Together", we can roughly understand the implementation process of the decentralized computing power network in model training and reasoning, as well as the corresponding research and development priorities.

Another important point that cannot be ignored is the design of the incentive layer/consensus algorithm of the computing power network. For example, an excellent network needs to have:

Make sure the benefits are attractive enough;

Ensure that each miner gets the benefits he deserves, including anti-cheating and more pay for more work;

Ensure that tasks are directly and reasonably scheduled and allocated on different nodes, and there will not be a large number of idle nodes or overcrowding of some nodes;

The incentive algorithm is simple and efficient, and will not cause excessive system burden and delay;

……

See how;Gensyn.ai;does it:

First of all, the "solver" in the computing power network competes for the right to process the tasks submitted by the "user" through the "bid" method, and according to the scale of the task and the risk of being found to be cheating, the solver; needs to mortgage a certain amount.

Solver; generates multiple; checkpoints (to ensure the transparency and traceability of work) while updating; parameters;, and will periodically generate cryptographic encryption reasoning about tasks; proofs (proofs of work progress);

When the Solver; completes the work and produces a part of the calculation results, the protocol will choose a; verifier, verifier; will also pledge a certain amount (to ensure that the; verifier; performs the verification honestly), and based on the above provided; Part of the calculation results.

Through the "Merkle tree"-based data structure, locate the exact location where the calculation results differ. The entire verification operation will be on the chain, and cheaters will be deducted from the pledged amount.

Project Summary

The design of the incentive and verification algorithm makes; Gensyn.ai; does not need to replay all the results of the entire computing task during the verification process, but only needs to copy and verify a part of the results according to the provided proof, which greatly improves the verification efficiency. efficiency. At the same time, nodes only need to store part of the calculation results, which also reduces the consumption of storage space and computing resources. In addition, potential cheating nodes cannot predict which parts will be selected for verification, so this also reduces the risk of cheating;

This method of verifying differences and discovering cheaters can also quickly find the error in the calculation process without comparing the entire calculation result (starting from the root node of the "Merkle tree" and traversing down step by step), which is It is very effective in dealing with large-scale computing tasks.

In short, the design goal of Gensyn.ai's incentive/verification layer is: simple and efficient. However, it is currently limited to the theoretical level, and the specific implementation may face the following challenges:

4. Thinking about the future

The question of who needs a decentralized computing power network has not been verified. The application of idle computing power to large-scale model training that requires huge computing power resources is obviously the most; make sense is also the most imaginative space. But in fact, bottlenecks such as communication and privacy have to make us rethink:

Is there really hope for decentralized training of large models?

If you jump out of this consensus, "the most reasonable landing scenario", is it a big scenario to apply decentralized computing power to the training of small AI models? From a technical point of view, the current limiting factors have been solved due to the size and architecture of the model. At the same time, from the market point of view, we have always felt that the training of large models will be huge from now to the future, but small; AI; model Is the market unattractive?

I don't think so. Compared with large models, small "AI" models are easier to deploy and manage, and are more efficient in terms of processing speed and memory usage. In a large number of application scenarios, users or companies do not need the more general reasoning capabilities of large language models, but It is only concerned with a very fine-grained prediction target. Therefore, small "AI" models are still the more viable option in most scenarios and should not be prematurely ignored in the "fomo" tide of large models.

Reference

About Foresight Ventures

Foresight Ventures bets on the innovation process of cryptocurrency in the next few decades, and manages multiple funds under its management: VC; fund, secondary active management fund, multi-strategy; FOF, special purpose; S; fund "Foresight Secondary Fund l", total assets The scale of management exceeds; 4; million US dollars. Foresight Ventures adheres to the concept of "Unique, Independent, Aggressive, Long-term", and provides extensive support for projects through strong ecological forces. Its team comes from senior personnel from top financial and technology companies including Sequoia China, CICC, Google, Bitmain, etc.

Website:;

**Disclaimer: Foresight Ventures; all articles are not intended as investment advice. Investment is risky, please assess your personal risk tolerance and make investment decisions prudently. **